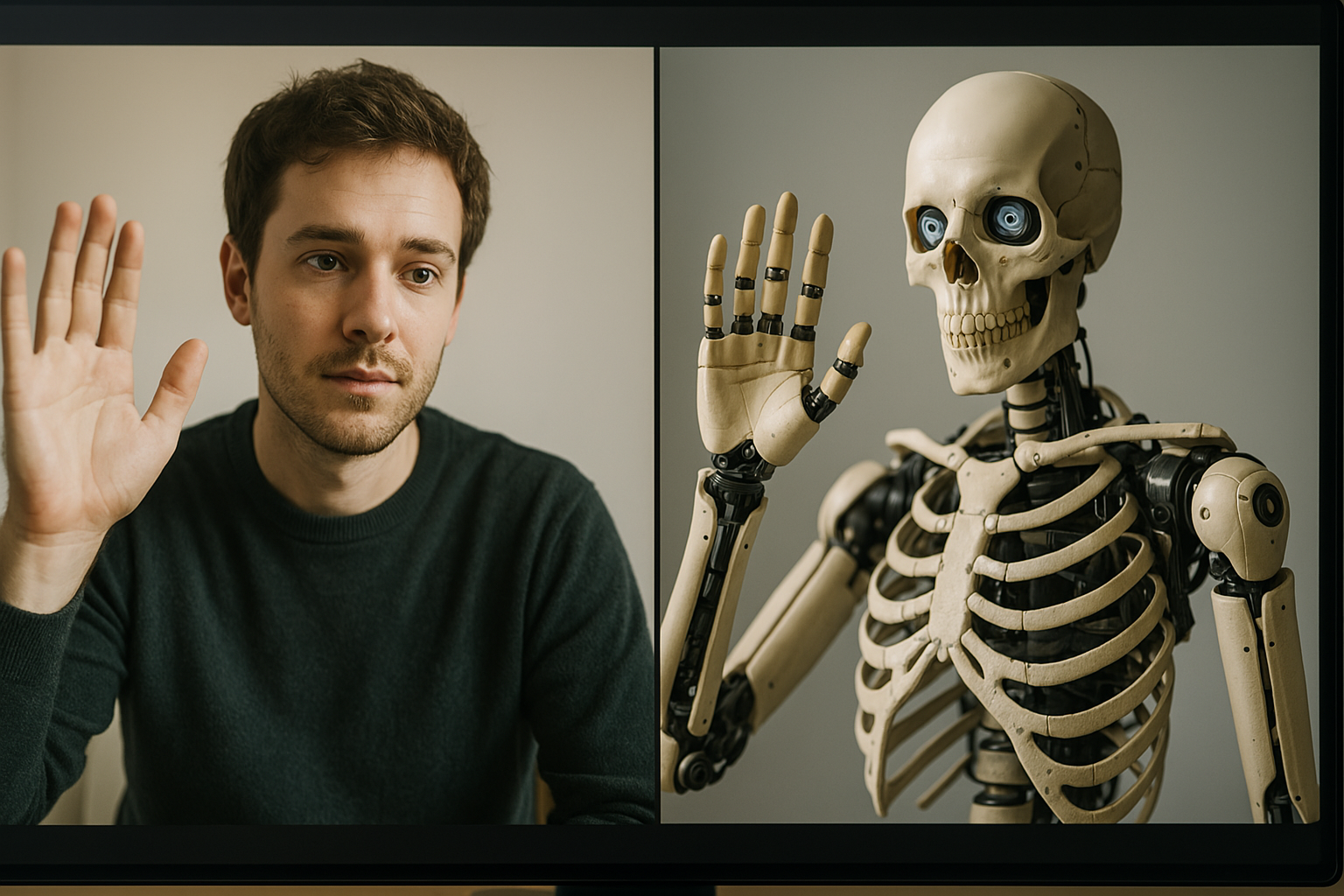

Have AI chatbots finally beaten the Turing test?

For decades, the Turing test has been the gold standard for measuring artificial intelligence. Originally devised by British codebreaker Alan Turing in 1950, the idea is simple: if a human can’t reliably distinguish an AI’s responses from those of another human, then the AI has passed the test.

Now, in a new study, researchers at the University of California San Diego (UCSD) claim that OpenAI’s GPT-4.5 and Meta’s LLaMa-3.1 have done just that, convincing real people they’re human more often than not.

AI or Human? The Results Might Surprise You

In the study, 126 university students and 158 online participants were asked to chat with both an AI and a human—without knowing which was which. The AI models had just five minutes to convince participants they were the real deal.

The results? When GPT-4.5 was specifically prompted to act human, it was mistaken for a real person 73% of the time, more often than the actual humans it was up against. Meanwhile, Meta’s LLaMa-3.1 was chosen as human 56% of the time, which the researchers also count as enough to pass this classic test of intelligence.
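One way to read these percentages is to ask how far each sits from the 50% you would expect if participants were simply guessing. The snippet below is a minimal sketch of that check using an exact two-sided binomial test. The figure of 100 conversations per model is a hypothetical placeholder chosen for illustration (the article does not report per-model counts), and the function name is ours, so this should not be taken as the researchers' actual analysis.

```python
from math import comb


def binomial_two_sided_p(successes: int, trials: int, p: float = 0.5) -> float:
    """Exact two-sided binomial p-value against a chance rate p."""
    # Probability of every possible count of "judged human" verdicts under chance.
    probs = [
        comb(trials, k) * p**k * (1 - p) ** (trials - k)
        for k in range(trials + 1)
    ]
    observed = probs[successes]
    # Add up all outcomes that are at least as unlikely as the observed one.
    # The small tolerance guards against floating-point ties.
    total = sum(q for q in probs if q <= observed * (1 + 1e-9))
    return min(1.0, total)


# ASSUMPTION: 100 conversations per model is a made-up number for illustration,
# not the study's real sample size.
HYPOTHETICAL_TRIALS = 100

for label, rate in [("73% judged human", 0.73), ("56% judged human", 0.56)]:
    wins = round(rate * HYPOTHETICAL_TRIALS)
    p_value = binomial_two_sided_p(wins, HYPOTHETICAL_TRIALS)
    print(f"{label}: p = {p_value:.4g} against a 50% guessing rate")
```

A small p-value means the result would be unlikely if participants were guessing at random. Under the assumed sample size, a rate near 73% sits far from chance, while a rate near 56% is much harder to tell apart from a coin flip; the real conclusion depends on the study's actual numbers.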

Lead researcher Cameron Jones described the findings as “strong evidence” that modern AI models have truly reached a new level.

While these results are impressive, there’s a twist. The chatbots only passed when they were given a clear instruction beforehand to “act human.” Without this prompt, their performance dropped significantly—often because they would openly admit to being AI.

This has sparked debate. Can we really say AI has passed the Turing test if it needs a nudge?

Jones thinks so: “Without any prompt, LLMs would fail for trivial reasons… but they could easily be fine-tuned to behave as they do when prompted, so I do think it’s fair to say that LLMs pass.”

The Bigger Picture

The study highlights how far AI has come in recent years. Just last year, OpenAI’s earlier models, GPT-3.5 and GPT-4, managed to fool humans only about 50-54% of the time. Now, with GPT-4.5 hitting 73%, it’s clear that AI is getting scarily good at mimicking us.

It’s a development that raises big questions about the future. If AI can already pass as human in casual conversation, what does this mean for trust, misinformation, and even human identity?

For those curious, you can try your own Turing test experiment at turingtest.live and see if you can spot the difference.

One thing’s for sure—Alan Turing’s 75-year-old challenge has never been more relevant.